As AI inference becomes the operational backbone of applications, a new tension is rising between innovation and infrastructure.

At GITEX 2025 in Dubai, discussions on stage and on the show floor centered on the challenge of scaling AI while balancing performance, data sovereignty, and cost.

Over five days, the event brought together the biggest names in tech and business, with many openly discussing new ways to maintain real-time intelligence as the cost of computation continues to soar.

Key Takeaways

GITEX 2025 shifted focus from AI training to the efficiency of large-scale AI model inferencing.

Nations are pursuing sovereign AI infrastructure to reduce their dependency on costly GPU supply chains.

Open-source hardware and software drive autonomy in global AI inferencing strategies.

Hybrid quantum systems could complement GPUs for faster, smarter AI inference.

Edge inferencing and multi-tenancy accelerate real-time AI without relying on centralized data.

Rethinking the Economics of Intelligence

Sam Altman, CEO of OpenAI, spoke virtually at the event with Peng Xiao, Group CEO of G42, about the concept of "AI-native societies." Altman's message was that the challenge ahead is not intelligence itself but access to it.

Altman said:

"The best way to prevent inequality from deepening in the AI era is to make intelligence abundant and cheap."

His view reframes AI inference as not merely a technical question but a societal one: how nations distribute intelligence as a shared resource.

The UAE's model, through initiatives like G42's "AI for Countries," treats inference capacity as a public good. The goal is to ensure that citizens, institutions, and enterprises alike can draw upon shared computational power.

In a world where the most valuable resource is no longer oil or data but the capacity to process information, the infrastructure behind AI inference has become both an economic and geopolitical asset.

Open Source & the Path to Autonomy

Few remarks at GITEX resonated as strongly as those from Jim Keller, CEO of Tenstorrent. In his address, "Taking Control of Your Sovereign AI Future," Keller urged nations and enterprises to break their dependence on proprietary hardware and software stacks.

Keller said:

"Open source is a path for innovation, but also a path to really own it. Imagine a world where you could own the AI IP, CPU tech, and open-source software. You just created a world where anybody could build an AI solution."

Keller's point reflects a growing realization across the AI model inference ecosystem: hardware supply constraints are slowing innovation. With high-end GPUs in constant shortage and prices driven upward by data center demand, open-source alternatives such as RISC-V-based accelerators and modular inference platforms offer a route to autonomy.

This vision aligns with G42's theme of "Building AI-Native Nations." Just as the internet era depended on open standards to scale, the next phase of AI inferencing relies on democratized hardware and software architectures that reduce vendor lock-in and enable collaboration across borders.

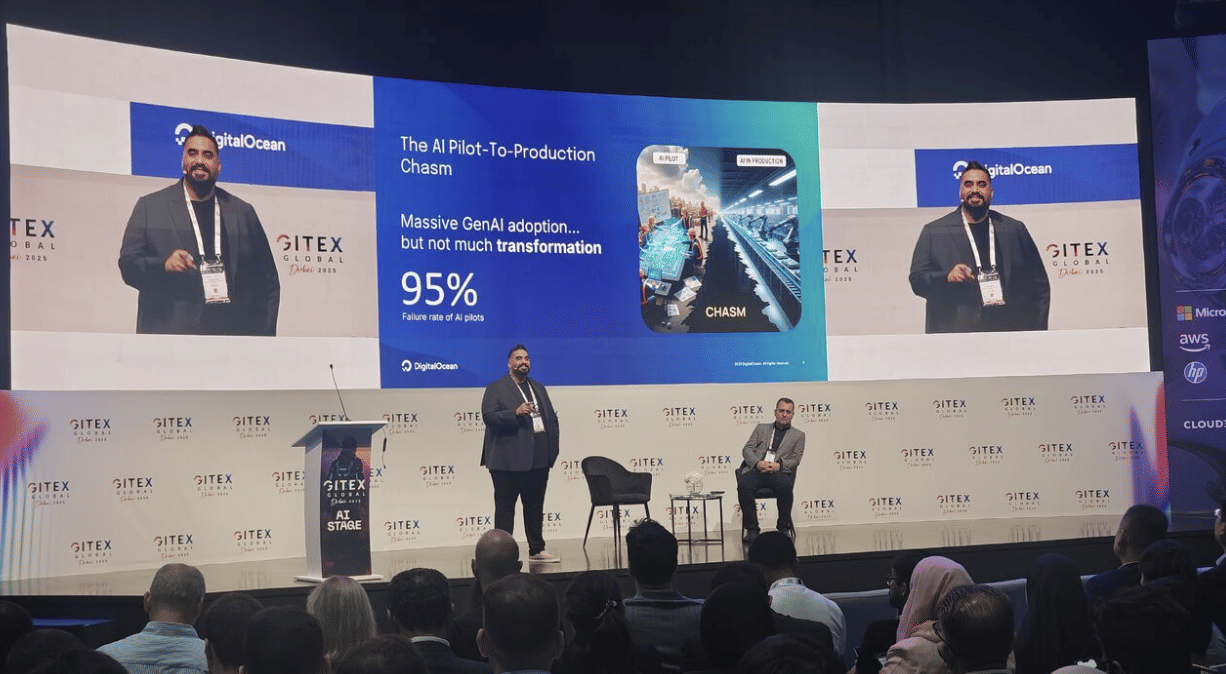

Suhaib Zaheer, SVP & GM, Managed Hosting at DigitalOcean, joined me on the AI stage and later shared how the company is seeing this trend and actively addressing the issue.

Zaheer said:

“AI innovation should not be limited by cost or access. Our goal is to give builders everywhere the ability to run mission-critical AI applications efficiently and predictably. With GPU instances starting at under a dollar per hour, serverless inference that charges only for tokens used, and transparent pricing that avoids idle costs or hidden fees, we’re redefining what affordable performance looks like.”

https://infogram.com/ai-inference-price-drops-over-time-20222024-1h9j6q7wqemxv4g

He went on to add:

“Through GradientAI, developers can go from prototype to production in minutes, using open frameworks and flexible deployment models that keep ownership and control in their hands.

“Whether they’re scaling an LLM or serving millions of requests a day, builders across the world can now run high-performance AI workloads with the same simplicity and cost-efficiency that made DigitalOcean a trusted name in cloud.”

Zaheer explained that this price-to-performance advantage is a key reason customers are turning to DigitalOcean. On average, he noted, clients experience around a 30% lower total cost of ownership compared with major hyperscalers and competitors.

He said:

"We're opening up access for folks who are just getting started, folks who have smaller businesses, and they're just getting going. So the same total cost of ownership gains that people have gotten through our cloud tech, they will also get through our AI platforms as well, including for inferencing."

Quantum & the New Architecture of Compute

While most discussions centered on GPUs, Ana Paula Assis, SVP and Chair of IBM EMEA and Growth Markets, challenged the industry to think beyond classical compute. She described how hybrid quantum architectures could reshape AI inference by executing multiple operations simultaneously.

Assis said:

"With quantum architecture, we can parallelize many operations to run at the same time, so innovators can create solutions today that a classical computer would take years to achieve."

This observation highlighted an often-overlooked opportunity. As AI models grow in complexity, hybrid architectures that combine CPUs, GPUs, and quantum accelerators could offer a more sustainable path forward.

In theory, this integration could also redefine which processors are best suited to edge AI inferencing, enabling energy-efficient chips to complement large-scale data-center infrastructure.

The Bottom Line

The mood at GITEX Global this year was pragmatic rather than speculative. From Altman's call to make intelligence "abundant and cheap" to Keller's advocacy for open hardware, the consensus was clear: AI inference will define the next decade of technological and economic growth.

To achieve this, nations and enterprises must address three converging challenges:

The hardware bottleneck created by GPU scarcity and energy cost.

The governance challenge of balancing sovereignty with global collaboration.

The human imperative to ensure that AI's benefits advance society as a whole.

Dubai's hosting of GITEX was symbolic of this intersection between ambition and capability. Its AI-dominated halls, sovereign cloud launches, and quantum showcases represented a new frontier. If the event demonstrated anything, it is that AI inference is no longer a back-end process but the architecture of a new intelligence economy.

FAQs

What is AI Inferencing?

AI inferencing is the process of using a trained AI model to make predictions, decisions, or classifications on new, unseen data, often in real time.

What is the difference between AI inferencing and training?

AI training is the process of teaching a model to make predictions by analyzing large datasets. AI inferencing, by contrast, is the process of applying that trained model to new data, often in real time, to produce results quickly and efficiently.
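To make the distinction concrete, here is a minimal Python sketch. It is an illustration only: the function names `train` and `infer`, the toy dataset, and the choice of a one-variable least-squares model are all assumptions made for this example, not anything presented at GITEX. Training looks at the whole labelled dataset once to estimate parameters; inference reuses those parameters on an input the model has not seen.

```python
def train(xs, ys):
    """Training: estimate a slope and intercept from labelled data (least squares)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance of x and y, and variance of x (both unnormalised).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


def infer(model, x):
    """Inference: apply the already-trained parameters to a new input."""
    slope, intercept = model
    return slope * x + intercept


# Hypothetical toy data that follows y = 2x + 1.
model = train([1.0, 2.0, 3.0, 4.0], [3.0, 5.0, 7.0, 9.0])

# The costly fitting step happens once; inference can then run per request.
prediction = infer(model, 10.0)
print(prediction)
```

In a production system the `train` step would typically run once on large hardware, while `infer` is the part that is called for every user request, which is why its cost dominates at scale.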

What are the two basic types of inferences in AI?

The first is logical inference (reaching conclusions from known facts, given relationships, or sets of rules), while the second is statistical inference (using probability to predict outcomes from existing data).
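The two types can be contrasted in a short Python sketch. Everything here is hypothetical and chosen for illustration: the function names, the weather rules, and the spam/ham observations. The logical version applies if-then rules until no new facts appear (simple forward chaining); the statistical version estimates a probability as a relative frequency in past data.

```python
def logical_inference(facts, rules):
    """Forward chaining: keep applying if-then rules until nothing new is derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # Fire the rule only if all premises are known and it adds something new.
            if conclusion not in known and set(premises) <= known:
                known.add(conclusion)
                changed = True
    return known


def statistical_inference(observations, outcome):
    """Estimate the probability of an outcome as its relative frequency in the data."""
    return observations.count(outcome) / len(observations)


# Hypothetical rules: rain implies wet ground, and wet ground implies slippery.
rules = [(("rain",), "wet_ground"), (("wet_ground",), "slippery")]
derived = logical_inference({"rain"}, rules)

# Hypothetical history of message labels.
p_spam = statistical_inference(["spam", "ham", "spam", "spam"], "spam")

print(sorted(derived))
print(p_spam)
```

The logical result is certain given the rules, whereas the statistical result is an estimate that changes as more data arrives. Most modern machine-learning models fall on the statistical side.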