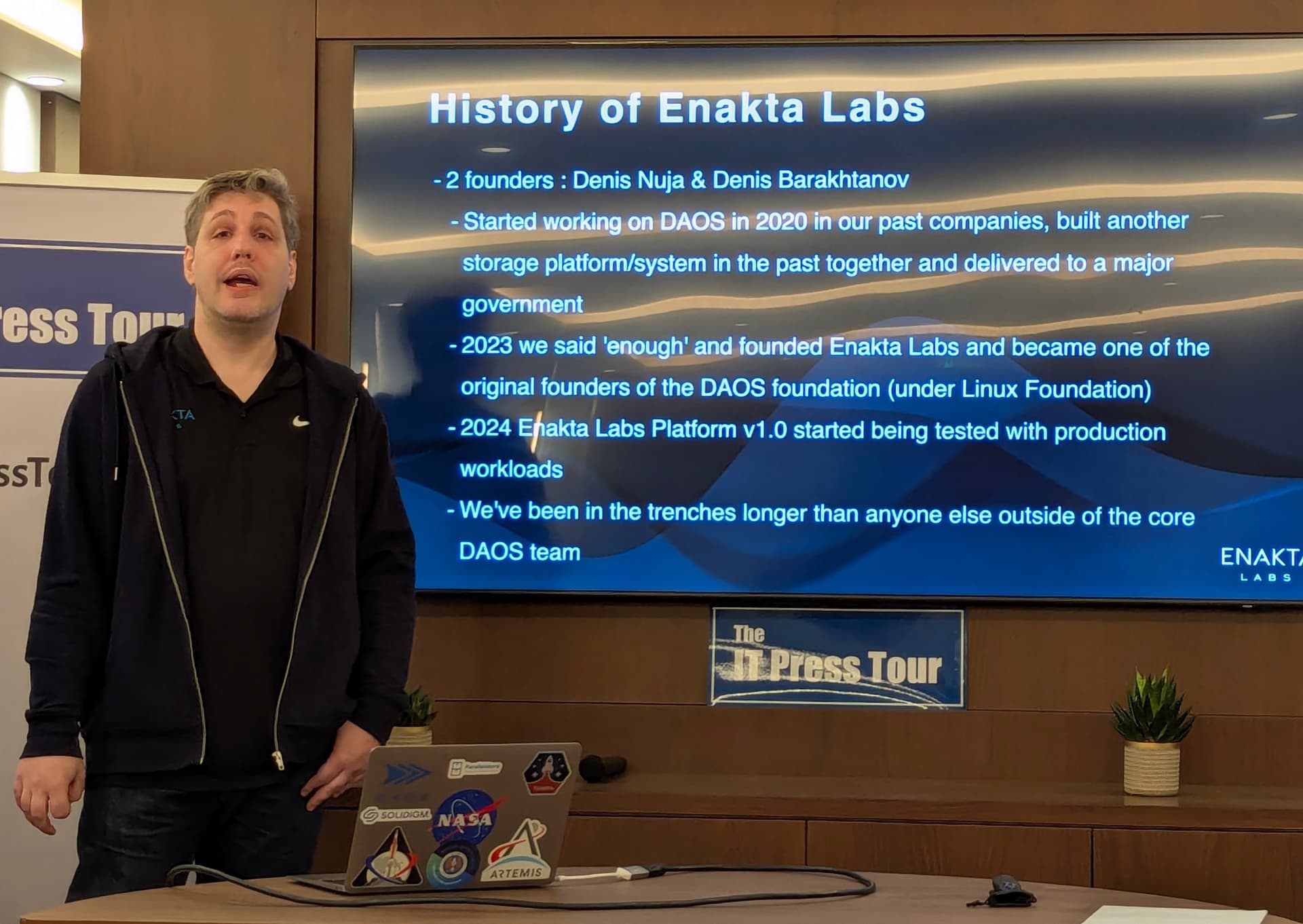

Can a storage system built for the world’s fastest compute environments also become the blueprint for a new generation of enterprise AI infrastructure? That question kept coming up in Athens during the 65th IT Press Tour, where I met the co-founder of Enakta Labs.

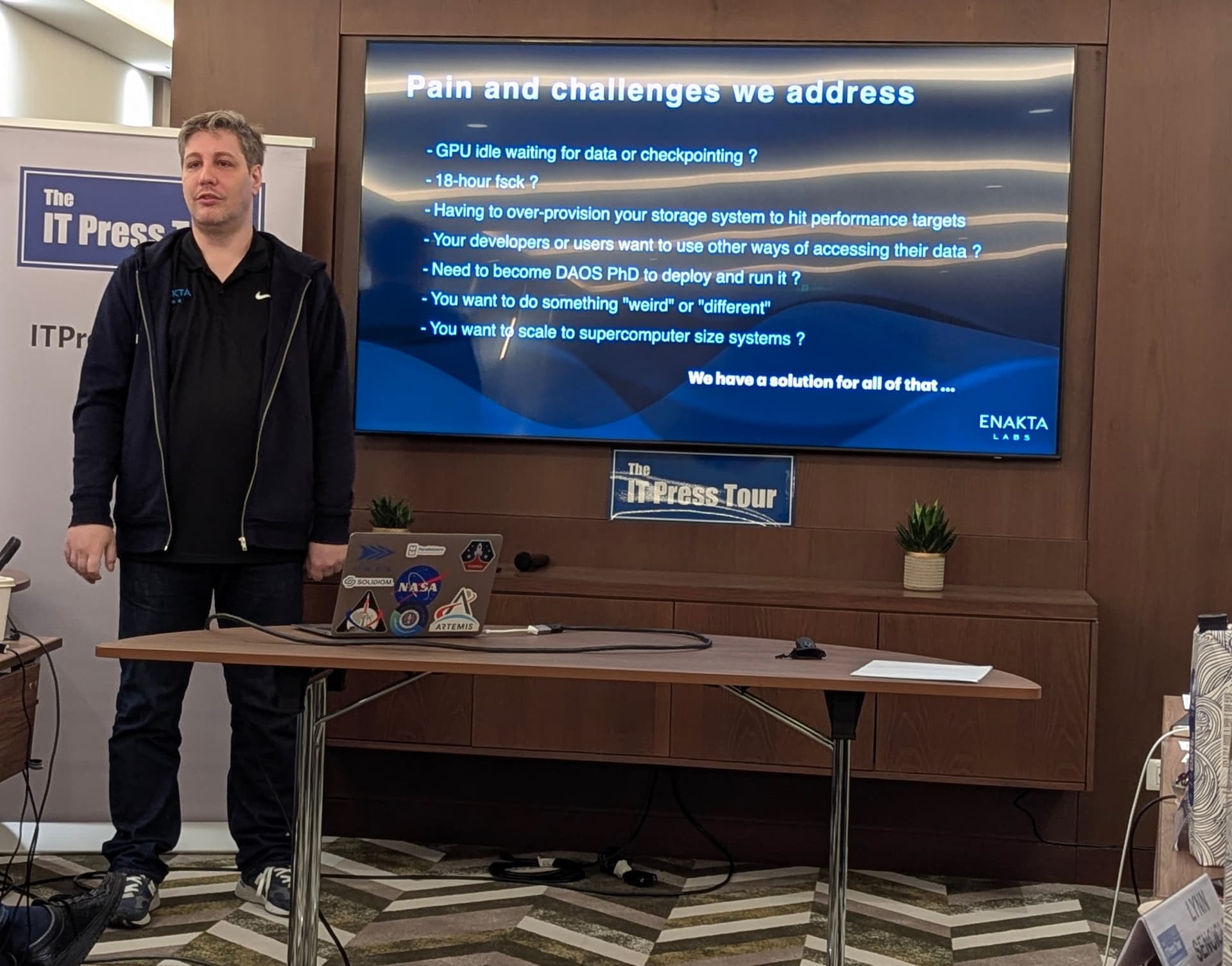

The storage industry is facing two pressures at once. The first is scale. Data volumes are growing rapidly because of AI, on top of compliance retention, sensor fleets, media archives, and research workloads. The second is complexity. Distributed storage systems promise linear performance but seldom deliver it without intervention, tuning, and expensive over-provisioning.

Most people reading this will have already experienced configuration sprawl, node drift, and backups left connected to the network, where ransomware reaches them first. When recovery is finally needed, the data you assumed would save you is gone.

This gap between promise and reality is where Enakta Labs has positioned itself. Not as a backup vendor, not as another cloud storage layer, but as an orchestrator and commercial steward of the DAOS ecosystem, making it usable, scalable, and recoverable in real environments.

Denis Nuja has been through these experiences many times and has the deployment scars to prove it.

What Is DAOS, and Why Was It Left Behind?

DAOS (Distributed Asynchronous Object Storage) is a parallel file system born from Intel’s Optane era. When Optane faded, DAOS lost its parent, its budgets, and most of its engineering headcount. But it did not lose its credibility.

In 2023, the Aurora supercomputer, running DAOS in Optane mode, won the IO500 Production Overall Score, delivering 1.3 TB/s of bandwidth. After Optane’s demise, DAOS was re-architected to use SSDs for metadata, with performance remaining essentially unchanged.

The challenge is not that DAOS lacks performance; the challenge is that it lacks adoption, marketing, and a commercial home. It is an orphan child fighting for attention against Storage Scale, Lustre, BeeGFS, Quobyte, PanFS, NetApp, Dell, HPE, Pure Storage, WEKA, and VAST Data.

The enterprise AI era has brought these systems into direct contention over the same job: delivering high-bandwidth file and object feeds to GPU clusters. Everyone is racing to support AI workloads. DAOS is racing without a PR budget, a large support org, or brand recognition. This is why Enakta Labs stepped in.

How Enakta Labs Solves Real Storage Problems

One of the largest inefficiencies in AI environments is GPU idle time. Research shows that AI clusters can lose 20% or more of training hours waiting for data. Then there is the capacity mismatch.

Many organizations deploy 4PB when they only need 1PB to hit performance targets. The rest sits unused, powered, cooled, depreciated, and billed for years. Enakta Labs is tackling this by maximizing throughput with off-the-shelf NVMe drives and high-bandwidth networking, rather than relying on custom hardware SKUs or proprietary memory fabrics.
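To put that mismatch in numbers, here is a minimal sketch using the 4PB and 1PB figures above. The function name and the returned fields are my own illustration, not anything from Enakta Labs.

```python
def overprovisioning(deployed_pb: float, needed_pb: float) -> dict:
    """Summarize how much deployed capacity sits idle.

    Hypothetical helper for illustration only; the inputs are the
    4PB deployed / 1PB needed figures quoted in the article.
    """
    if needed_pb <= 0 or deployed_pb < needed_pb:
        raise ValueError("expected 0 < needed_pb <= deployed_pb")
    idle_pb = deployed_pb - needed_pb
    return {
        "idle_pb": idle_pb,                        # capacity bought but unused
        "idle_fraction": idle_pb / deployed_pb,    # share of the deployment that is idle
        "factor": deployed_pb / needed_pb,         # how many times over the need was bought
    }


result = overprovisioning(deployed_pb=4.0, needed_pb=1.0)
print(f"Idle capacity: {result['idle_pb']:.0f}PB "
      f"({result['idle_fraction']:.0%} of the deployment, "
      f"{result['factor']:.0f}x over-provisioned)")
```

On those figures, three quarters of the deployment is capacity that still has to be powered and cooled without ever being needed.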

Enakta's IO500 performance tests in 2025 showed 270+ GB/s from a single client using eight 400G NICs, without RDMA acceleration, outperforming many multi-client global deployments.
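For a sense of scale, here is a rough back-of-the-envelope check of that single-client number. It assumes each of the eight NICs runs at 400 Gb/s and uses decimal units (8 bits per byte, no protocol overhead); both are my assumptions, not figures from Enakta.

```python
NIC_COUNT = 8            # NICs in the single client, from the article
GBIT_PER_NIC = 400       # assumption: each NIC is a 400 Gb/s port
MEASURED_GBYTES = 270    # GB/s, the "270+" lower bound from the article


def line_rate_gbytes(nic_count: int, gbit_per_nic: float) -> float:
    """Aggregate raw line rate in GB/s, ignoring protocol overhead."""
    return nic_count * gbit_per_nic / 8  # 8 bits per byte


aggregate = line_rate_gbytes(NIC_COUNT, GBIT_PER_NIC)
utilization = MEASURED_GBYTES / aggregate

print(f"Aggregate line rate: {aggregate:.0f} GB/s")
print(f"Measured share of line rate: at least {utilization:.1%}")
```

Under those assumptions the client has 400 GB/s of raw network capacity, so 270 GB/s works out to roughly two thirds of line rate.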

Their design choices are deliberate. They PXE boot the entire cluster from an immutable in-memory OS image based on SLES and containerize storage services using Podman instead of Kubernetes. Clusters of a few hundred nodes are deployed in hours, not weeks, assuming hardware and networking behave correctly, which they admit is often the real bottleneck.

Enakta has built its own job scheduler and cluster-wide namespace system, because existing tools could not deliver the orchestration required for clusters scaling toward supercomputer size.

Why You Should Care

Offline recovery is no longer an edge case. It is becoming a governance requirement, a resilience mandate, and a commercial opportunity. Connected backups fail first because attackers go there first. Incremental storage improvements will not fix this. The only copy that survives the crash is the offline copy.

Enakta Labs is reviving an orphan system that already proved its performance. Now they are proving its usability, orchestration, and recovery certainty at scale, without tuning, without proprietary dependencies, and without the illusion that online backups will always save you.

I’ll be inviting the co-founder onto a future podcast episode. What questions would you like me to ask them?

Share your thoughts, and I’ll take them straight into the conversation.