When Jason Lohrey founded Arcitecta in Melbourne in 1998, the term “data gravity” had not yet entered the enterprise vocabulary. Two decades later, that phrase defines one of the most complex challenges in digital infrastructure. But Lohrey and his team are asking a different question altogether: what if the direction of gravity has been misunderstood?

At the company’s session during the IT Press Tour in New York, Lohrey joined virtually from Australia to share his view on the evolving connection between data, energy, and geography. He argued that as the world faces shortages of both power and water, enterprises may need to bring data to where the compute and water are, rather than the other way around.

It is a simple yet far-reaching idea that reframes how Arcitecta views the next era of infrastructure, not as a competition between cloud and on-premises environments, but as a balance between sustainability, sovereignty, and human purpose.

The Company That Licenses by People, Not Capacity

Lohrey describes Arcitecta as “a data company that enables people to do cool things with data.” As COO Graham Beasley explained during the same session, Arcitecta is different because it licenses by people, not capacity. That choice is both a cultural and a technical decision.

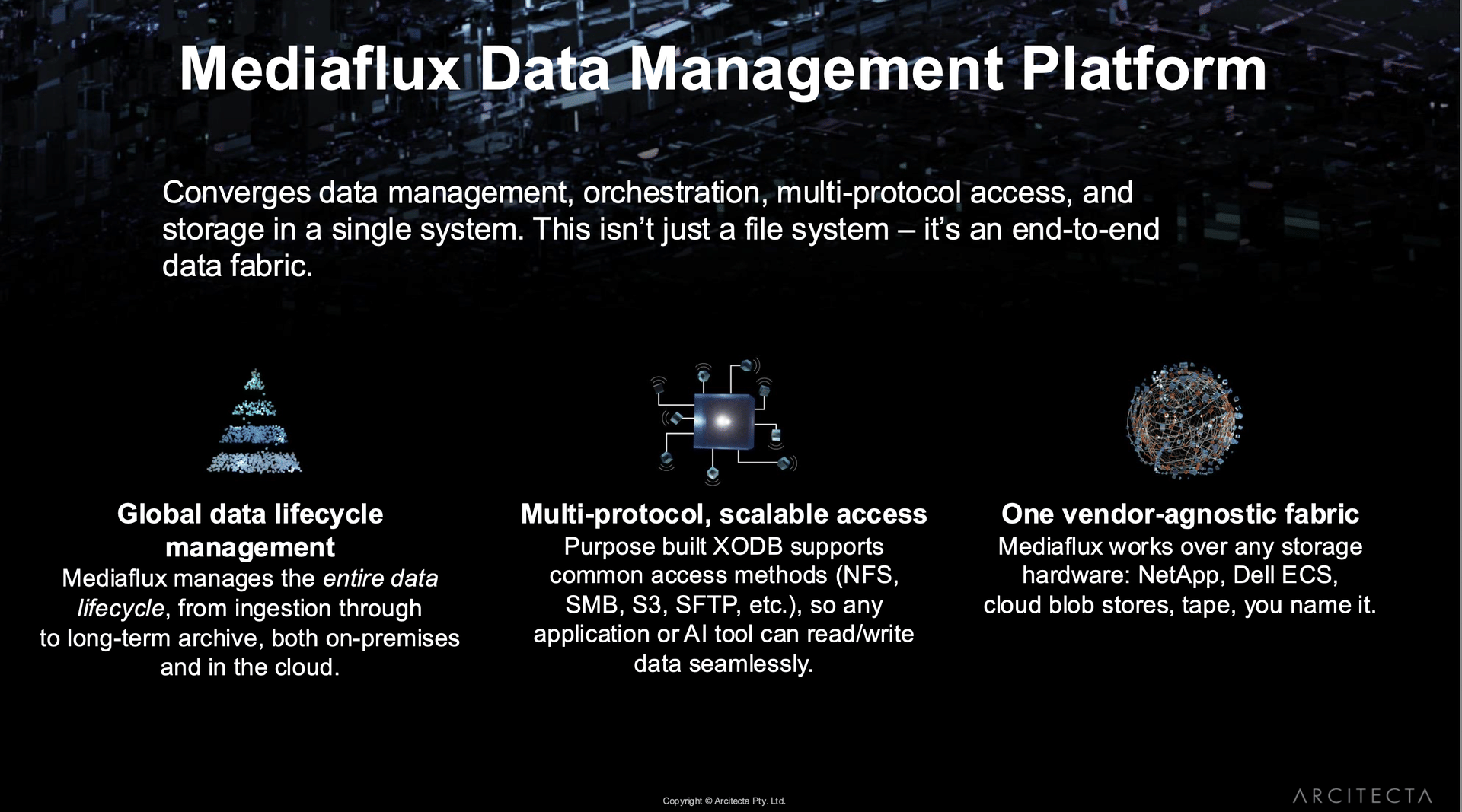

This belief has guided Arcitecta’s flagship product, Mediaflux, a data management platform used across research, government, and cultural institutions in Australia, the United States, and Europe. The platform manages everything from active, high-performance workloads to long-term archives and tape libraries, uniting them under a single metadata-driven layer. From the outside, it may appear to be one more player in the unstructured data management market, but its foundation is human-centered.

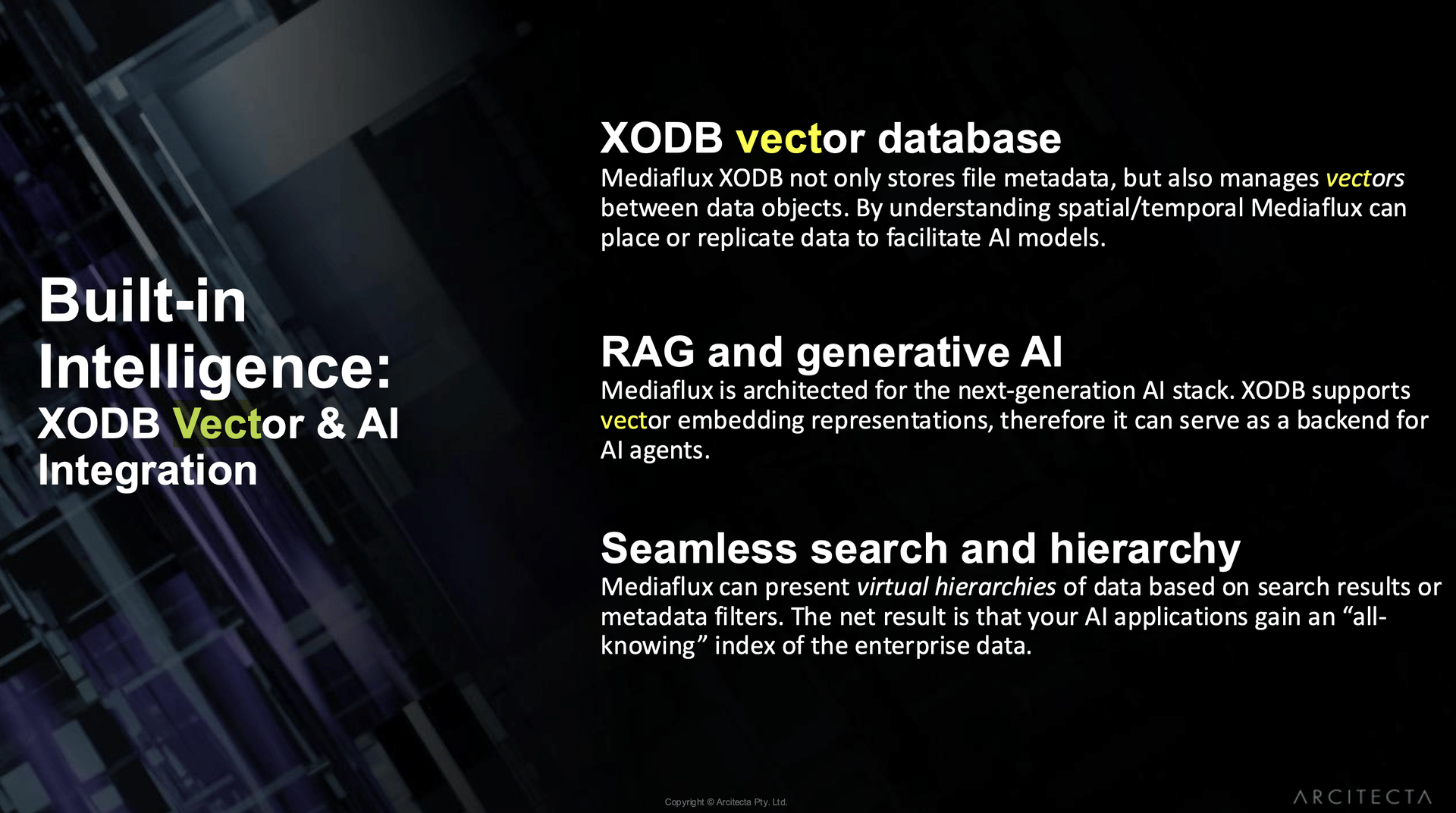

“We build everything we do,” Lohrey said. “We’re first-principles makers. We even write our own database.” That home-grown database, XODB, sits at the heart of Mediaflux, managing metadata and now vectors, which enable AI models and traditional storage systems to coexist within a single, coherent environment.

The Hidden Cost of Moving Data

Arcitecta’s core message is that data movement is expensive, both financially and environmentally. Every migration consumes energy, produces emissions, and can cross political borders where sovereignty laws apply.

Lohrey stated that the company has begun modeling the embodied energy associated with large-scale data migrations. “Every time someone migrates five to ten petabytes,” he said, “there’s a lot of embodied energy in that.” The speed of digital growth has left little time for reflection on its physical consequences.
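To get a feel for the scale involved, here is a rough back-of-envelope sketch in Python. It is not Arcitecta's model, and it covers only a simple per-terabyte energy figure rather than full embodied energy. The function name `migration_estimate`, the link speed, and the energy-per-terabyte value are all hypothetical placeholders to be replaced with measured numbers.

```python
def migration_estimate(petabytes, link_gbps, kwh_per_tb):
    """Return (transfer_days, energy_kwh) for a bulk migration.

    Uses decimal units (1 PB = 1000 TB = 10**15 bytes) and assumes the
    link runs at full line rate for the whole transfer, which is optimistic.
    """
    total_bits = petabytes * 10**15 * 8
    seconds = total_bits / (link_gbps * 10**9)
    transfer_days = seconds / 86_400
    # kwh_per_tb is an assumed input; no real-world value is implied here.
    energy_kwh = petabytes * 1000 * kwh_per_tb
    return transfer_days, energy_kwh


# Placeholder inputs, not measurements: 10 PB over a 100 Gbit/s link,
# with an illustrative 0.5 kWh per terabyte moved.
days, kwh = migration_estimate(petabytes=10, link_gbps=100, kwh_per_tb=0.5)
print(f"{days:.1f} days, {kwh:,.0f} kWh")  # 9.3 days, 5,000 kWh
```

Even with an idealized link, ten petabytes takes more than nine days to move, which helps explain why the cost of each migration deserves scrutiny before it starts.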

This is where the idea of taking data to where compute and water are available becomes powerful. Data centers consume about 1.5 percent of global electricity, a figure that could double by the end of the decade, according to the International Energy Agency. Yet many hyperscale facilities are built in water-stressed regions, including Northern Virginia, Arizona, and parts of Europe.

Lohrey suggests flipping the traditional model. Instead of constructing new power-hungry facilities in deserts or drought zones, organizations could position compute near renewable energy and water sources, then move data there for processing.

This reversal challenges the long-standing “compute-to-data” assumption and feels increasingly relevant as environmental limits collide with digital expansion.

Decoupling Storage and Compute

Eric Polet, Arcitecta’s Director of Product Marketing, explained that decoupling storage from compute has become one of the company’s main priorities.

“It used to be that wherever computing goes, data will follow. We’re breaking that rule.”

That shift is most visible in Mediaflux Burst, which allows organizations to use external compute capacity in the cloud or another data center without relocating entire datasets. For research institutions such as Princeton University and TU Dresden, this approach eases the tension between agility and cost. They can keep core data nearby yet burst into additional compute zones when experiments or AI training require more power.

In practical terms, the future of infrastructure will depend less on the location of a cloud region and more on the availability of energy, bandwidth, and water to support it.

The TU Dresden Example: Simplicity as a Competitive Edge

One of Arcitecta’s strongest case studies comes from Technische Universität Dresden, which needed to manage petabytes of research data while maintaining fluid collaboration across distributed teams.

Before adopting Mediaflux, TU Dresden faced familiar challenges: fragmented storage, uneven indexing, and difficulty recalling archived data. By integrating Mediaflux with GRAU Data XtreemStore and automating tiered archiving between disk and tape, the university turned data management into a strategic advantage.

Mediaflux simplified complex workflows, reduced costs, and made even tape-stored data searchable. The result was a system that could expand naturally while preserving collaboration, a balance rarely achieved in large-scale research environments.

The TU Dresden deployment also highlights the renewed role of tape. Once dismissed as outdated, it now provides a lower-energy, higher-density option for cold data, aligning perfectly with the sustainability priorities Lohrey promotes.

Princeton’s 100-Year Plan for Data Longevity

At Princeton University, the challenge is different but equally ambitious. The institution is designing a 100-year data management plan to ensure that researchers decades from now can still access the discoveries being made today.

To accomplish this, Princeton created TigerData, a platform built on top of Mediaflux that connects multiple storage vendors, including Dell and IBM. It manages over 200 petabytes of research data and uses IBM’s Diamondback tape systems for long-term preservation.

Eric Polet said Princeton’s team “were getting held hostage by one vendor and really getting the screws put to them on support.” Mediaflux offered a vendor-agnostic solution, enabling them to mix and match hardware without needing to rewrite workflows. “We can make it just appear as storage behind this,” he said. That adaptability reduced costs and created resilience across generations of technology change.

Princeton’s plan illustrates a deeper form of sustainability, one focused on continuity and stewardship.

Data Sovereignty and the Arcitecta View

Operating from Australia while expanding in the United States and Europe gives Arcitecta an unusual perspective on the global debate over data sovereignty. Regions prioritize different risks: Australians focus on energy resilience and connectivity across vast distances, Europeans emphasize privacy and regulation, and Americans increasingly view data through the lenses of efficiency and cost.

Arcitecta’s technology bridges these differences through a single metadata layer that treats files in Frankfurt, Sydney, and Boston uniformly, while still respecting local policies. Lohrey summarized it: “Our job is to enable people to orchestrate and move data at scale, and to deal with large numbers of things. The more things you have, the greater the complexity and the greater the opportunity for insight.”

The remark captures both a technical principle and a human one. Data management is not only about controlling volume but also about understanding meaning without losing autonomy.

Throughout the discussion, Lohrey emphasized that Mediaflux was never meant to be a passive file system. It functions as an “operating system for metadata and data,” capable of recognizing who created a file, where it originated, how it has evolved, and whether it should remain active.

He noted that one customer generates over 70 million file events each month, each tracked in real-time. “We can tell exactly what was created, by whom, and from what vector,” he said. “That means we can identify what’s active, what’s dormant, and what can move to tape.”
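The idea of sorting files into active, dormant, and tape-ready groups from their event history can be sketched in a few lines of Python. This is an illustration only, not Mediaflux code: the `FileEvent` record, the `classify` function, and the 90-day and 365-day thresholds are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class FileEvent:
    """Hypothetical event record: which file, who touched it, and when."""
    path: str
    user: str
    timestamp: datetime


def classify(events, now,
             dormant_after=timedelta(days=90),
             archive_after=timedelta(days=365)):
    """Map each path to 'active', 'dormant', or 'tape' from its latest event."""
    latest = {}
    for ev in events:
        # Keep only the most recent event time seen for each path.
        if ev.path not in latest or ev.timestamp > latest[ev.path]:
            latest[ev.path] = ev.timestamp

    tiers = {}
    for path, ts in latest.items():
        age = now - ts
        if age >= archive_after:
            tiers[path] = "tape"
        elif age >= dormant_after:
            tiers[path] = "dormant"
        else:
            tiers[path] = "active"
    return tiers


events = [
    FileEvent("/proj/a.dat", "alice", datetime(2023, 1, 1)),
    FileEvent("/proj/a.dat", "bob", datetime(2024, 12, 20)),
    FileEvent("/proj/b.dat", "alice", datetime(2024, 8, 1)),
    FileEvent("/proj/c.dat", "carol", datetime(2023, 6, 1)),
]
tiers = classify(events, now=datetime(2025, 1, 1))
print(tiers)
# {'/proj/a.dat': 'active', '/proj/b.dat': 'dormant', '/proj/c.dat': 'tape'}
```

A production system would work from a continuous event stream and richer policy rules, but the principle is the same: the latest recorded activity for a file drives where it should live.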

This level of visibility is not about control, but rather about responsibility. It helps organizations understand their digital footprint and decide where energy, storage, and attention should be directed. It introduces a quiet form of accountability and a sense of ethics into how data is handled in the AI era.

From Metadata to Meaning: Building AI-Ready Infrastructure

The introduction of vector support to XODB marks Arcitecta’s entry into the age of AI. Lohrey made it clear that the company is not in the business of creating large language models but of ensuring that “we get data to the right AIs that are fit for particular purposes.”

This involves storing vector embeddings, numerical representations of content, alongside metadata. It allows enterprises to use retrieval-augmented generation and other AI-driven methods without fragmenting their information across incompatible systems.
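As a minimal sketch of what keeping embeddings next to metadata makes possible, the Python below filters records by metadata first and then ranks the survivors by cosine similarity. It does not use or represent the XODB API. The record layout, the `search` function, and the three-dimensional toy vectors are hypothetical; real embeddings have hundreds or thousands of dimensions.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Each record keeps its metadata and its embedding side by side.
records = [
    {"id": "doc-1", "meta": {"site": "sydney", "type": "report"}, "vec": [0.9, 0.1, 0.0]},
    {"id": "doc-2", "meta": {"site": "boston", "type": "report"}, "vec": [0.8, 0.2, 0.1]},
    {"id": "doc-3", "meta": {"site": "sydney", "type": "image"}, "vec": [0.0, 0.1, 0.9]},
]


def search(query_vec, top_k=2, **filters):
    """Apply exact-match metadata filters, then rank by vector similarity."""
    candidates = [
        r for r in records
        if all(r["meta"].get(key) == value for key, value in filters.items())
    ]
    ranked = sorted(candidates, key=lambda r: cosine(query_vec, r["vec"]), reverse=True)
    return [r["id"] for r in ranked[:top_k]]


print(search([1.0, 0.0, 0.0]))                 # ['doc-1', 'doc-2']
print(search([1.0, 0.0, 0.0], site="sydney"))  # ['doc-1', 'doc-3']
```

Because the filter and the similarity ranking run over the same records, a retrieval step can respect attributes such as location or data type without consulting a second system.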

Both the Dana-Farber Cancer Institute and the National Film and Sound Archive in Australia have applied these capabilities. Dana-Farber uses Mediaflux to standardize research data before feeding it into custom AI models for cancer research.

The National Film and Sound Archive uses an integration with Wasabi AiR to recognize faces, voices, and objects within its digital collections, making years of cultural material searchable within seconds.

For both organizations, AI is not about automation, but rather about discovery. Arcitecta’s focus is to make that discovery safe, sustainable, and contextual.

Where Data, Energy, and Humanity Meet

If Arcitecta’s technology connects data, its Datakamer initiative connects people. The first event in Boston earlier this year brought together researchers, IT professionals, and customers to share practical lessons in data stewardship. The company describes it as “a low-pressure learning event” focused on conversation rather than sales.

Future gatherings are planned for Europe, Australia, and Princeton University. This expansion signals Arcitecta’s growing global presence and its belief that community, not marketing, drives progress. The company grows not through license volume but through relationships, linking practitioners who want to make data work for humanity rather than the other way around.

As AI workloads expand and geopolitical strains shape supply chains, the world is rediscovering the physical nature of data. It requires water, consumes power, crosses borders, and challenges ethical boundaries of ownership and control.

Arcitecta’s perspective is practical. By virtualizing storage, separating compute from storage, and embedding intelligence at the right layers, the company demonstrates how responsible data management can be implemented in practice. Lohrey summarized it best: “We want to make it easier for people to identify patterns, to collaborate, and that collaboration includes systems as much as people.”

Arcitecta’s work feels grounded and tangible. It reminds us that the digital world still relies on real materials: bits stored in physical locations, cooled by real water, and powered by real energy. The future of computing may not belong to whoever builds the biggest data center, but to those who understand where the rivers still flow.

Over to You

I’ll be sitting down with Arcitecta for an upcoming podcast to dig deeper into this journey. What questions would you like me to put to them? Share your thoughts, and I’ll take them straight into the conversation.