At the IT Press Tour in Amsterdam, Dr. Michael Klemm, CEO of the OpenMP Architecture Review Board (ARB) and Principal Member of Technical Staff at AMD, shared OpenMP's origin story, its role in modern computing, and why, even after nearly three decades, it may matter now more than ever.

OpenMP began life in 1997. The computing world was moving away from proprietary systems and toward shared-memory multiprocessing. At the time, every hardware vendor had their own programming model. That meant researchers who wrote software for one system could not simply run it on another system. Rewriting code for every platform was slow, expensive, and in many cases impossible.

Dr. Klemm put it this way: "Imagine you are a researcher writing a weather simulation. If you coded it for one vendor's system, you couldn't simply run it on another system. You would have to rewrite huge sections of code. That was not sustainable."

The solution was OpenMP. It created a vendor-neutral standard for parallel programming. Developers could use a common set of compiler directives, small annotations in their code, to tell the system how to parallelise work across multiple cores. Instead of hand-writing threading logic, they could rely on OpenMP to manage it.
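To make this concrete, here is a minimal C sketch of a directive in action. The function name `saxpy` and its parameters are illustrative choices, not part of OpenMP; only the `#pragma omp parallel for` line comes from the standard. Compiled with OpenMP enabled (for example, `-fopenmp` in GCC or Clang), the loop iterations are shared among threads. Without it, the pragma is ignored and the same code runs serially.

```c
#include <stddef.h>

/* Illustrative example (hypothetical function): y[i] = a * x[i] + y[i].
 * The single directive asks the compiler to split the loop iterations
 * across the available threads; the loop body itself is ordinary C. */
void saxpy(size_t n, float a, const float *x, float *y)
{
    #pragma omp parallel for
    for (size_t i = 0; i < n; i++) {
        y[i] = a * x[i] + y[i];
    }
}
```

Each iteration touches only its own element, so no locking is needed. That independence is what makes this loop safe to parallelise with one line.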

It was a slight shift that solved a big problem. It freed developers from hardware lock-in and made it possible to scale software across different systems without having to rewrite it from scratch.

What OpenMP Actually Does

For non-specialists, OpenMP might sound abstract. So let's break it down.

Every modern computer has multiple cores. To use them effectively, applications need to split up work and run tasks in parallel. That is easier said than done. Without standards, every hardware vendor would force programmers to write custom, low-level code to handle threading, synchronisation, and memory sharing.
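Synchronisation is where hand-written threading code most often goes wrong. As a sketch of how OpenMP takes that job over, the hypothetical function below sums an array in parallel. The `reduction(+:total)` clause, which is part of OpenMP, gives each thread a private partial sum and has the runtime combine them at the end, replacing the locks or atomic operations a programmer would otherwise write by hand.

```c
#include <stddef.h>

/* Illustrative example (hypothetical function): parallel array sum.
 * Each thread accumulates into its own private copy of `total`;
 * the reduction clause combines the copies safely when the loop ends. */
double parallel_sum(size_t n, const double *values)
{
    double total = 0.0;

    #pragma omp parallel for reduction(+:total)
    for (size_t i = 0; i < n; i++) {
        total += values[i];
    }
    return total;
}
```

With floating-point data, the parallel order of additions can change the last bits of the result compared with a serial loop, so bit-for-bit reproducibility is not guaranteed.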

OpenMP provides a common way to do this. By adding simple directives, such as #pragma omp parallel in C and C++ or !$omp parallel in Fortran, developers can tell the compiler, "this part of the program can run on multiple cores." The compiler and runtime handle the details.
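A minimal C sketch of the parallel directive on its own follows. The function name `count_threads` is hypothetical; `omp_get_thread_num` and `omp_get_num_threads` are standard OpenMP runtime functions declared in `omp.h`. The `_OPENMP` macro, which compilers define when OpenMP is enabled, guards those calls so the file still builds as plain serial C.

```c
#include <stdio.h>
#ifdef _OPENMP
#include <omp.h>
#endif

/* Illustrative example (hypothetical function): open a parallel region,
 * have every thread print its ID, and return the size of the team. */
int count_threads(void)
{
    int team_size = 1;  /* result when OpenMP is not enabled */

    #pragma omp parallel
    {
        int id = 0;
#ifdef _OPENMP
        id = omp_get_thread_num();
        /* Only one thread writes the shared variable, avoiding a race. */
        #pragma omp single
        team_size = omp_get_num_threads();
#endif
        printf("hello from thread %d\n", id);
    }
    return team_size;
}
```

Calling `count_threads()` prints one line per thread, in no guaranteed order. The team size usually defaults to the number of available cores and can be changed with the `OMP_NUM_THREADS` environment variable.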

It is like adding road signs for traffic. Instead of chaos at every intersection, you get consistent, predictable flows that work across different "roads" or hardware systems.

Dr. Klemm described it with a smile. "OpenMP is the plumber of high-performance computing. It makes sure everything flows, even if you do not see it."

The Road to OpenMP 6.0

Standards do not stand still. OpenMP has evolved steadily since its introduction, with regular updates to keep pace with hardware and programming needs.

The most recent version, OpenMP 6.0, continues that evolution. Support for accelerators arrived with device offloading in OpenMP 4.0, extending the same programming model beyond CPUs to GPUs, FPGAs, and other specialised processors, and each release since has refined it. This matters because modern computing is increasingly heterogeneous. A single system might combine CPUs, GPUs, and other accelerators, each suited to different tasks.
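As a sketch of what offloading looks like, the C function below uses the target family of directives, part of OpenMP since version 4.0. The function name is hypothetical. Whether the loop actually runs on a GPU depends on the compiler having been built with offload support for that device; by default, if no suitable device is available, the region runs on the host CPU instead.

```c
/* Illustrative example (hypothetical function): vector addition offloaded
 * to an accelerator. map(to: ...) copies the inputs to the device and
 * map(from: ...) copies the result back to host memory. If no device is
 * available, or OpenMP is not enabled, the loop runs on the host. */
void vector_add_offload(int n, const float *a, const float *b, float *c)
{
    #pragma omp target teams distribute parallel for \
        map(to: a[0:n], b[0:n]) map(from: c[0:n])
    for (int i = 0; i < n; i++) {
        c[i] = a[i] + b[i];
    }
}
```

The loop body is identical to what a CPU-only version would contain; only the directive and its data-mapping clauses change, which is the "one programming model" idea in practice.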

Dr. Klemm emphasised this shift. "Our goal is to give developers one programming model that spans all of it. Whether you run on CPUs, GPUs, or new architectures we have not yet imagined, OpenMP should be there."

That vision speaks to a future where programmers can focus on solving problems rather than wrestling with the quirks of every piece of hardware.

The Politics of Open Standards

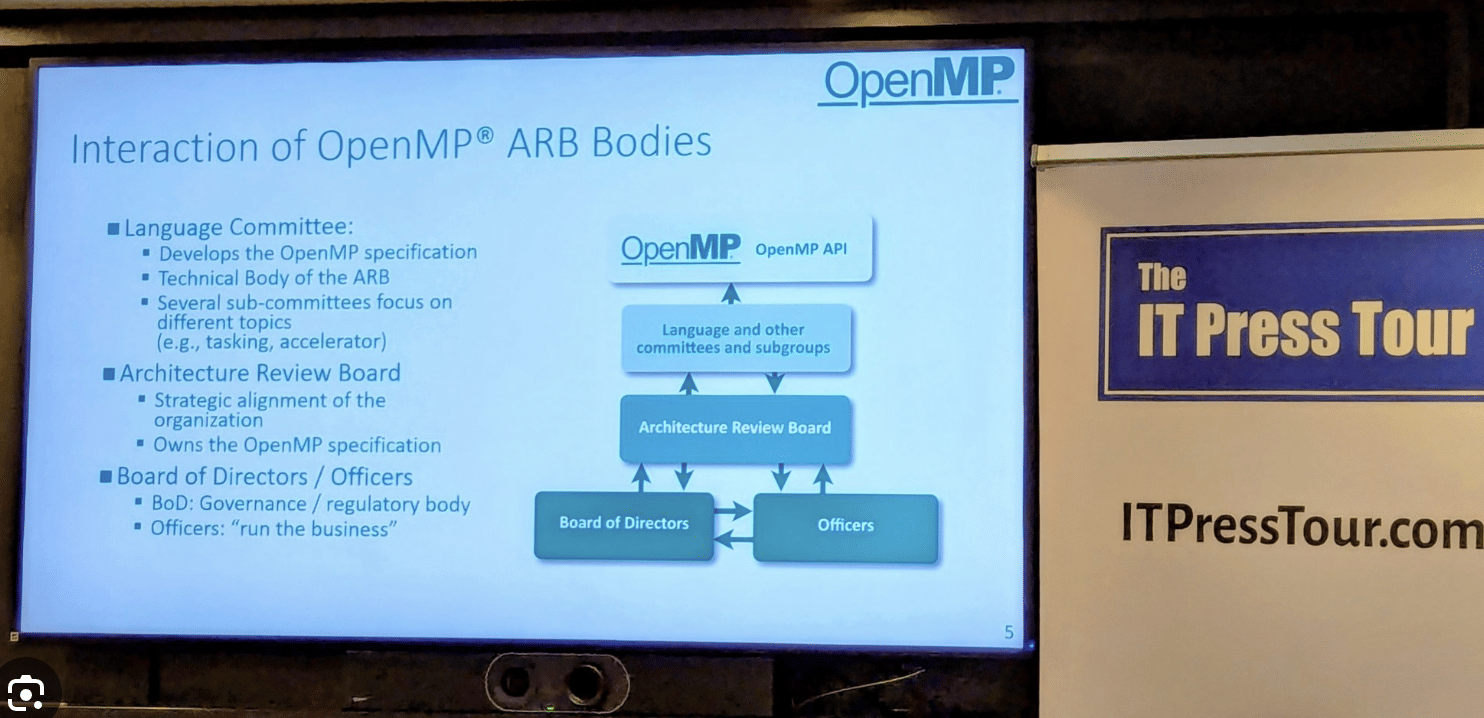

OpenMP is governed by the Architecture Review Board, which comprises representatives from companies such as AMD, Intel, NVIDIA, and ARM, as well as research institutions and universities.

This diversity is both a strength and a challenge. On the positive side, it ensures no single vendor controls the standard, and it reflects real-world needs across industries and geographies. On the downside, building consensus is slow. Every decision must balance competing interests, and ratifying new features can take years.

There are also geopolitical constraints. For example, U.S. export control laws can affect how standards groups collaborate internationally. Dr. Klemm acknowledged these tensions but emphasised that the focus remains on keeping OpenMP open, portable, and relevant.

Why Businesses Should Care

For years, OpenMP has been seen as a niche tool for high-performance computing. But the landscape has changed. Today, any business that works with AI, large-scale simulation, or data-intensive workflows is likely to benefit from it.

Consider cloud providers optimising energy usage, banks running risk models, or media companies transcoding video. All of these involve parallel processing across many cores or accelerators. OpenMP provides a stable, vendor-neutral approach to achieve this.

There is also an efficiency story. Rewriting code for every new architecture is not sustainable. Companies want to write once and deploy everywhere. OpenMP helps make that possible.

The timing matters. AI has made parallelism mainstream. Training a large language model or running a real-time recommendation system means spreading work across thousands of cores. At the same time, energy efficiency is becoming as important as raw speed.

My Takeaway from Amsterdam

OpenMP has endured multiple hardware revolutions, from the emergence of multicore CPUs to the proliferation of GPUs. Few standards can claim that. Yet the real story is accessibility.

Most businesses lack the time or budget to reinvent their software stacks for every new wave of hardware. By providing a portable and predictable model for parallel programming, OpenMP quietly reduces costs, accelerates adoption, and enables innovation in ways that most people never see.

In an era obsessed with shiny new AI models, it is worth remembering the plumbing. Without standards like OpenMP, the infrastructure underneath would be far more fragile.

Over to You

I will be sitting down with Dr. Michael Klemm for an upcoming podcast to continue this conversation. What would you like me to ask him? Should I press him on how OpenMP will integrate with AI frameworks, or on whether accelerators like FPGAs will truly be supported at scale? Or on the governance challenge of keeping so many vendors aligned?

Share your questions, and I will take them directly to him on the show.